The digitization of healthcare will reduce treatment costs while artificial intelligence will change how doctors work – digital health is usually discussed in the future tense, even though e-health solutions and AI are already present in medicine. Here are the reasons why this is the case and why it is harmful.

Summary:

- Everybody can write about the future of healthcare – no knowledge is needed

- This is often the case in the daily press, industry reports, and social media

- Some of the visions are far from reality and can be misleading

- Futurism is rooted in insufficient scientific evidence about the benefits of digital health

- But it also has good sides – it strengthens digital literacy and stimulates debate

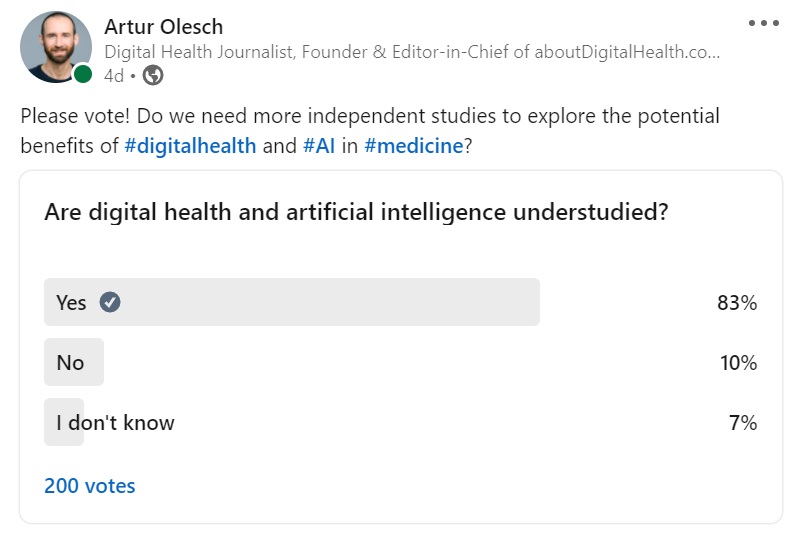

- Digital health remains understudied

- Some study reviews demonstrate that many of the available studies are biased

- The most common biases in research on digital health: confirmation and publication bias

- Another problem is the hermetic nature of scientific language

- We need more evidence in digital health to support strategic decision-making

- Independent studies will allow us to avoid waste of resources

- Otherwise, digital health interventions will be seen as dietary supplements rather than medicines – “they certainly won’t hurt, and they may even help”

- At the same time, we cannot rely solely on classic clinical trials (expensive, time-consuming, sometimes impossible to conduct). For digital health solutions, we need to consider new forms of evidence, such as real-world testing and new methods of validation by regulators

The temptation to predict the future of healthcare

Digital health will become the new standard, improve the quality of treatment and preventive medicine, shape a new business model in healthcare, strengthen the patients’ role and democratize the health care system. In addition, artificial intelligence will transform communication between doctors and patients, support clinicians in the decision-making process, significantly affect the development of the health sector, and enable personalized medicine.

It is easy to write about the future of healthcare because you can write anything. There are no rules and you do not need any scientific facts, logic or in-depth knowledge of technology or sociology. All you need is imagination and predictions that assume progress is linear. As if history had not taught us how hard it is to draw a straight line from a candle to a light bulb or from a horse to a car.

Notions such as AI and digitization trigger extreme emotions because we are talking about something unknown, something that has not happened yet and which could be a potential threat. And fear is the catalyst of curiosity. It does not matter that we have already read hundreds of times about robots that will replace doctors. Whenever a problem is referred to in the future tense, the dark scenarios return, polarizing people and leading to discussions between the enthusiasts and opponents of digitization.

In the haze of futurism, we forget that digitization is already happening. We all boarded the train headed to the future of healthcare many years ago. Data is entered into electronic medical records, AI systems help make clinical decisions, COVID-19 vaccination certificates are available on our smartphones, and telemedicine makes it possible to diagnose and treat patients remotely. But this is the everyday reality that we are getting used to, and it is much less exciting than artificial intelligence making mistakes on the operating table and killing patients.

It is easy to get carried away by creative medical futurism. Every option is possible, and it is difficult to challenge scenarios that might take place in the next 10 or 50 years because nobody can predict the future, regardless of their knowledge or experience.

Medical futurism has its bright sides as well. Coming up with various scenarios allows us to think about what kind of future for healthcare we want. Should artificial intelligence determine the course of treatment on the basis of millions of similar clinical cases, or should it be decided by a doctor who follows his or her experience and intuition? Which is more important, the result or the process of the treatment? To what extent are we willing to give up our privacy, and do we want to share behavioral data in exchange for precise preventive measures?

The hermetic nature of science

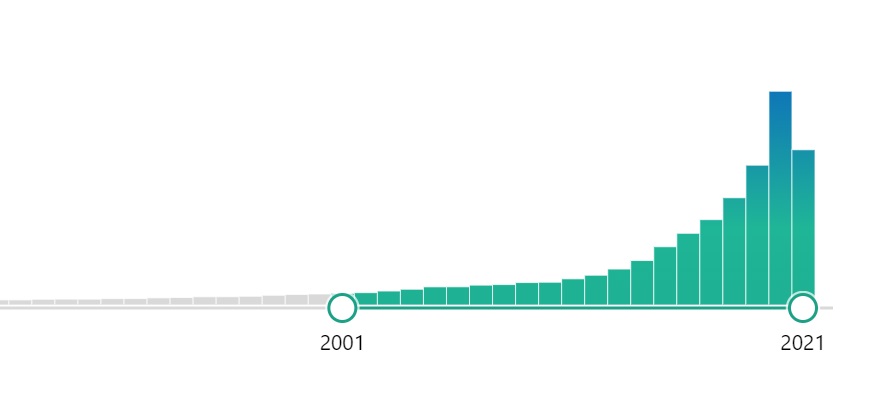

When you enter the phrase “digital health” into PubMed, the largest library of scientific papers in the world, you will get over 36,000 results – studies, analyses and abstracts. Their number is growing quickly: from 683 in 2010 to over ten times more in 2020 – 7,751. Nevertheless, digital health is still studied relatively rarely in comparison to other fields. What is missing is data for analyses, interest in this type of paper and, often, funding.

In 2001 there were 267 papers published; in 2020 – 7826 papers.

It isn’t easy to establish cause-and-effect relationships, whether we are talking about the implementation of an IT system in a hospital and its effect on treatment results and costs, or the application of telehealth solutions and the resulting savings in the healthcare system. To obtain credible data, it would be necessary to conduct a randomized controlled study comparing results in hospitals with and without electronic health records (EHRs). In practice, this is impossible.

An example? A paper entitled The Economic Impact of Artificial Intelligence in Health Care: Systematic Review (JMIR Publications) has shown that none of the 66 studies describing the impact of AI on health costs contained an analysis that would be considered complete in methodological terms. Many studies did not take into account the initial investment and operational costs of AI-related infrastructure and services. Moreover, there was no assessment of alternative solutions that could achieve a similar impact.

Nevertheless, there are high-quality studies out there. Their growing number is evidenced by The Lancet Digital Health, a journal that promotes digital technologies in health practice. Every month, experts present scientific analyses of e-health solutions, from telecare and wearables to medical devices. However, these are scientific articles written in academic language, not as easy to read as popular science analyses on social media.

For example, an article entitled An integrated nomogram combining deep learning, Prostate Imaging–Reporting and Data System (PI-RADS) scoring, and clinical variables for identification of clinically significant prostate cancer on biparametric MRI: a retrospective multicentre study, based on the examination of 592 patients, shows how using the ClaD integrated nomogram for risk stratification may help identify people with prostate cancer who are at low or very low risk of clinically significant disease. Such patients may be put under active surveillance or participate in preventive programs. This is a huge, measurable benefit for patients, confirmed by research. On the other hand, because the area of expertise is so narrow, this discovery is likely to interest only a small number of people. An additional obstacle to promoting such news is the hermetic language of scientific papers, but this is a much broader problem.

An attempt to translate the latest findings related to the application of AI in medicine into the language of popular science was made by Eric Topol in his book, Deep Medicine: How Artificial Intelligence Can Make Healthcare Human Again. Topol managed to explain technical issues clearly and interestingly, citing numerous case studies and scientific papers. Not surprisingly, he is an expert often hosted on TV shows and an opinion leader with over 459,000 followers on Twitter. Digital health needs more such bridges between science and an audience with average technological knowledge or digital literacy.

The future-oriented thinking full of bias and clichés

Digital health has been in the spotlight recently, especially in the context of COVID-19 and med-tech solutions such as telemedicine and telecare. This visibility in the mass media is positive – every article increases digital literacy and drives cultural transformation in the long term.

Although freestyle storytelling about the future of healthcare is exciting, it’s pure fiction. It doesn’t add much to the substantive discussion. Healthcare futurism – if practiced by people who have no industry expertise – often uses clichés, invokes unfounded prejudices, and spreads fear and even claims that are not in line with reality. Take some industry reports predicting optimistic growth in the digital health market, the rapid development of artificial intelligence, or the potential benefits of various digital health technologies. Many of these are marketing or lobbying in nature, yet they end up cited as data sources in credible contexts.

But, on the other hand, all of them provide insights into the future and provoke discussion. Separating the wheat from the chaff is very difficult in this case.

I wish more scientific facts and less biased content of various kinds were present in the public debate. I wish there were more independent research in digital health. Why? Because decision-makers need to make decisions based on research data and wisely invest resources in solutions that improve patient outcomes and health care quality.

Don’t get me wrong – in principle, innovation should evolve organically, through real-world testing of solutions by end users. I don’t mean the “evidence first – then approval” approach but research that explores the benefits and potential of existing tools.

Digital health solutions are not a matter of the future. They can help patients here and now, not “tomorrow” or “maybe.” But their benefits are still under-researched, which leaves plenty of room for pseudo-scientific theories. Without evidence, you may get the impression that digital health is like dietary supplements – they certainly won’t hurt, and they may even help.
